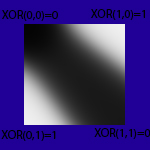

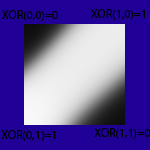

Top left: a grayscale image showing the progress of the training (black = 0, white = 1).

Basically, the network learns the XOR logic function here:

0/0=>0

1/0=>1

0/1=>1

1/1=>0

So the top-left and bottom-right corners are black, and the other two corners are white.
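As a quick illustration of the table above, here is a minimal sketch that lists the expected XOR value and the corresponding shade for each corner. It is not part of the library. The variable names `x`, `y`, `expected` and `shade$` are chosen for this example only, and the `Debug` lines only show up when the program runs with the debugger enabled.

```purebasic
; Sketch: expected XOR value and gray shade at each corner of the square.
; x and y stand for the two network inputs; 0 is drawn black, 1 is drawn white.
For y = 0 To 1
  For x = 0 To 1
    expected = x ! y ; "!" is PureBasic's bitwise XOR operator
    If expected = 1
      shade$ = "white"
    Else
      shade$ = "black"
    EndIf
    Debug Str(x) + "/" + Str(y) + " => " + Str(expected) + " (" + shade$ + ")"
  Next x
Next y
```

The four lines come out in the same order as the table: 0/0, 1/0, 0/1, 1/1.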

Create a DLL named "NeuroNetLib":

Code:

;create a new, empty neural net (layers are added afterwards with AddLayer)

CompilerIf #PB_Compiler_Processor=#PB_Processor_x86

#S=4

CompilerElse

#S=8

CompilerEndIf

#SD=8

#TypeInput=0

#TypeSigmoid=1

#TypeSwish1=2

#TypeSoftMax=3

#TypeLeakyRelu=4

#TypeTanH=5

#real=0

#positive=1

ProcedureDLL CreateNet()

*net=AllocateMemory(#S)

If *net=0:MessageRequester("Error","Allocate memory failed"):ProcedureReturn 0:EndIf

PokeI(*net,0)

;*net => nblayer

;*net+#S*1 => pointer to the input layer (layer 1)

;*net+#S*2 => pointer to the second layer (layer 2), etc.

ProcedureReturn *net

EndProcedure

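;finalize the net: call once after the last AddLayer() to give the last layer room for its target values

ProcedureDLL EndLayer(*net)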

nblayer.i=PeekI(*net)

*Layer=PeekI(*net+#S*nblayer)

NbNeuron.i=PeekI(*layer)

type.i=PeekI(*layer+#S*7)

*PLayer=PeekI(*net+#S*(nblayer-1))

NbNeuronPrevOutput.i=PeekI(*PLayer)

target=#SD*NbNeuron

output=#SD*NbNeuron

error=#SD*NbNeuron

weight=#SD*NbNeuron*NbNeuronPrevOutput

bias=#SD*NbNeuron

som=#SD*NbNeuron

*layer=ReAllocateMemory(*layer,#S*8+output+weight+bias+error+target+som)

If *layer=0:MessageRequester("Error","ReAllocate memory failed"):ProcedureReturn 0:EndIf

*startBias=*layer+#S*8+weight

*startOutput=*layer+#S*8+weight+bias

*startError=*layer+#S*8+weight+bias+output

*startTarget=*layer+#S*8+weight+bias+output+error

*startSom=*layer+#S*8+weight+bias+output+error+target

PokeI(*layer+#S*0,NbNeuron)

PokeI(*layer+#S*1,NbNeuronPrevOutput)

PokeI(*layer+#S*2,*startBias)

PokeI(*layer+#S*3,*startOutput)

PokeI(*layer+#S*4,*startError)

PokeI(*layer+#S*5,*startTarget)

PokeI(*layer+#S*6,*startSom)

PokeI(*layer+#S*7,type)

PokeI(*net+#S*nblayer,*layer)

ProcedureReturn 1

EndProcedure

;add a layer of NbNeuron neurons with the given activation type (keep the returned pointer: the net may have moved in memory)

ProcedureDLL AddLayer(*net,NbNeuron.i,type.i)

nblayer.i=PeekI(*net)

nblayer+1

PokeI(*net,nblayer)

*net=ReAllocateMemory(*net,#S+#S*nblayer)

If *net=0:MessageRequester("Error","ReAllocate memory failed"):ProcedureReturn 0:EndIf

If type=#TypeInput

*layer=AllocateMemory(#S*8+#SD*NbNeuron)

If *layer=0:MessageRequester("Error","Allocate memory failed (input)"):ProcedureReturn 0:EndIf

PokeI(*net+#S*nblayer,*layer)

nbprevneuron.i=0

*startBias=0

*startOutput=*layer+#S*8

*startError=0 ;the input layer stores no error

*startTarget=0

*startSom=0

PokeI(*layer+#S*0,NbNeuron)

PokeI(*layer+#S*1,nbprevneuron) ;nb neuron previous layer

PokeI(*layer+#S*2,*startBias)

PokeI(*layer+#S*3,*startOutput)

PokeI(*layer+#S*4,*startError)

PokeI(*layer+#S*5,*startTarget)

PokeI(*layer+#S*6,*startSom)

PokeI(*layer+#S*7,type)

ProcedureReturn *net

EndIf

*PLayer=PeekI(*net+#S*(nblayer-1))

NbNeuronPrevOutput.i=PeekI(*PLayer)

output=#SD*NbNeuron

error=#SD*NbNeuron

target=0 ;room for the target values is added to the last layer by EndLayer()

weight=#SD*NbNeuron*NbNeuronPrevOutput

bias=#SD*NbNeuron

som=#SD*NbNeuron

*layer=AllocateMemory(#S*8+output+weight+bias+error+target+som)

If *layer=0:MessageRequester("Error","Allocate memory failed"):ProcedureReturn 0:EndIf

*startBias=*layer+#S*8+weight

*startOutput=*layer+#S*8+weight+bias

*startError=*layer+#S*8+weight+bias+output

*startTarget=*layer+#S*8+weight+bias+output+error

*startSom=*layer+#S*8+weight+bias+output+error+target

PokeI(*layer,NbNeuron)

PokeI(*layer+#S,NbNeuronPrevOutput)

PokeI(*layer+#S*2,*startBias)

PokeI(*layer+#S*3,*startOutput)

PokeI(*layer+#S*4,*startError)

PokeI(*layer+#S*5,*startTarget)

PokeI(*layer+#S*6,*startSom)

PokeI(*layer+#S*7,type)

PokeI(*net+#S*nblayer,*layer)

ProcedureReturn *net

EndProcedure

;initialize the weights and biases with random values

ProcedureDLL InitWeight(*net,RandomType.i)

nblayer.i=PeekI(*net)

For i=2 To nblayer

*layer=PeekI(*net+#S*i)

nbneuron=PeekI(*layer)

nbprevoutput=PeekI(*layer+#S)

*weight=*layer+#S*8

*bias=PeekI(*layer+#S*2)

;randomize the weights

For t=0 To nbneuron*nbprevoutput-1

If RandomType=0

Repeat

a.d=(Random(200)-100)/100

Until a<>0.0

ElseIf Randomtype=1

Repeat

a.d=Random(100)/100

Until a<>0.0

EndIf

PokeD(*weight+#SD*t,a) ;write a nonzero random weight in [-1;+1] (#real) or ]0;+1] (#positive)

Next t

;randomize the bias weights

For t=0 To nbneuron-1

If RandomType=0

Repeat

a.d=(Random(200)-100)/100

Until a<>0.0

ElseIf Randomtype=1

Repeat

a.d=Random(100)/100

Until a<>0.0

EndIf

PokeD(*bias+#SD*t,a) ;write a nonzero random bias in [-1;+1] (#real) or ]0;+1] (#positive)

Next t

Next i

EndProcedure

ProcedureDLL ReadNbNeuron(*net,layer.i)

*layer=PeekI(*net+#S*layer)

ProcedureReturn PeekI(*layer)

EndProcedure

ProcedureDLL ReadPrevNbNeuron(*net,layer.i)

*layer=PeekI(*net+#S*layer)

ProcedureReturn PeekI(*layer+#S)

EndProcedure

ProcedureDLL.d ReadOutput(*net,layer.i,neuron.i)

*layer=PeekI(*net+#S*layer)

*startoutput=PeekI(*layer+#S*3)

neuron-1

neuron*#SD

*startoutput=*startoutput+neuron

value.d=PeekD(*startoutput)

ProcedureReturn value

EndProcedure

ProcedureDLL.d ReadSomme(*net,layer.i,neuron.i)

*Layer=PeekI(*net+#S*layer)

*StartSom=PeekI(*Layer+#S*6)

neuron-1

neuron*#SD

som.d=PeekD(*StartSom+neuron)

ProcedureReturn som

EndProcedure

ProcedureDLL.d ReadBiasWeight(*net,layer.i,neuron.i)

*Layer=PeekI(*net+#S*layer)

*StartBias=PeekI(*Layer+#S*2)

neuron-1

neuron*#SD

*StartBias+neuron

bias.d=PeekD(*StartBias)*1

ProcedureReturn bias

EndProcedure

ProcedureDLL.d ReadError(*net,layer.i,neuron.i)

*layer=PeekI(*net+#S*layer)

*startError=PeekI(*layer+#S*4)

neuron-1

neuron*#SD

*startError+neuron

value.d=PeekD(*startError)

ProcedureReturn value

EndProcedure

ProcedureDLL.d ReadTarget(*net,neuron.i)

nblayer.i=PeekI(*net)

*layer=PeekI(*net+#S*nblayer)

*startTarget=PeekI(*layer+#S*5)

neuron-1

neuron*#SD

*startTarget+neuron

value.d=PeekD(*startTarget)

ProcedureReturn value

EndProcedure

ProcedureDLL.d ReadWeight(*net,layer.i,neuron.i,weight.i)

*layer=PeekI(*net+#S*layer)

nbPrevouput.i=PeekI(*layer+#S)

*startWeight=*layer+#S*8

weight-1

weight*#SD

neuron-1

neuron*nbPrevouput

neuron*#SD

*startWeight+neuron

*startWeight+weight

value.d=PeekD(*startWeight)

ProcedureReturn value

EndProcedure

ProcedureDLL DeleteNet(*net)

nblayer=PeekI(*net)

For i=1 To nblayer

*layer=PeekI(*net+#S*i)

FreeMemory(*layer)

Next i

FreeMemory(*net)

ProcedureReturn 1

EndProcedure

ProcedureDLL SetWeight(*net,layer.i,neuron.i,weight.i,value.d)

*layer=PeekI(*net+#S*layer)

nbPrevouput.i=PeekI(*layer+#S)

*startWeight=*layer+#S*8

weight-1

weight*#SD

neuron-1

neuron*nbPrevouput

neuron*#SD

*startWeight+neuron

*startWeight+weight

PokeD(*startWeight,value)

EndProcedure

;activation functions https://en.wikipedia.org/wiki/Activation_function

;here, Sigmoid:

Procedure.d Sigmoid(output.d)

output.d=1/(1+Exp(-output))

ProcedureReturn output

EndProcedure

;here, Swish1 (Beta=1) :

Procedure.d Swish1(output.d)

output.d=output/(1+Exp(-output))

ProcedureReturn output

EndProcedure

;here, LeakyReLu :

Procedure.d LeakyReLu(output.d)

If output<0

output*0.01

EndIf

ProcedureReturn output

EndProcedure

;define inputs

ProcedureDLL SetInput(*net,Input.i,value.d)

*layer=PeekI(*net+#S)

*StartOutput=PeekI(*layer+#S*3)

input-1

input*#SD

*StartOutput+Input

PokeD(*StartOutput,value)

ProcedureReturn 1

EndProcedure

ProcedureDLL.d SetBiasWeight(*net,layer.i,neuron.i,bias.d)

*Layer=PeekI(*net+#S*layer)

*StartBias=PeekI(*Layer+#S*2)

neuron-1

neuron*#SD

*StartBias+neuron

PokeD(*StartBias,bias)

EndProcedure

;define desired output

ProcedureDLL SetDesireOutput(*net,Output.i,value.d)

nblayer.i=PeekI(*net)

*layer=PeekI(*net+#S*nblayer)

*startTarget=PeekI(*layer+#S*5)

output-1

output*#SD

*startTarget+Output

PokeD(*startTarget,value)

ProcedureReturn 1

EndProcedure

ProcedureDLL.d CalculError(*net)

;*net => nblayer

;*net+#S*1 => pointer to layer 1=> inputs

;*net+#S*2 => pointer to layer 2

;*net+#S*3 => pointer to the third layer, etc.

nblayer=PeekI(*net)

;compute the errors on the output layer (target - output)

*layer=PeekI(*net+#S*nblayer)

nbneuron=PeekI(*layer)

*startOutput=PeekI(*layer+#S*3)

*startError=PeekI(*layer+#S*4)

*startTarget=PeekI(*layer+#S*5)

For i=0 To nbneuron-1

; target.d=PeekD(*startTarget+i*#SD)

; output.d=PeekD(*startOutput+i*#SD)

; error.d=(1-target)/(1-output)

; error=(target/output)+error

; error=-error

error.d=PeekD(*startTarget+i*#SD)-PeekD(*startOutput+i*#SD)

PokeD(*startError+#SD*i,error)

Next i

;compute the errors of the hidden layers

For i=nblayer To 3 Step -1

*layer=PeekI(*net+#S*i)

nbneuron=PeekI(*layer)

*startOutput=PeekI(*layer+#S*3)

*startError=PeekI(*layer+#S*4)

*startTarget=PeekI(*layer+#S*5)

*startWeight=*Layer+#S*8

*Player=PeekI(*net+#S*(i-1))

Pnbneuron=PeekI(*Player)

*PstartError=PeekI(*Player+#S*4)

For j=0 To Pnbneuron-1

Terror.d=0

For t=0 To nbneuron-1

Error.d=PeekD(*startError+t*#SD)

Weight.d=PeekD(*startWeight+t*#SD*Pnbneuron+j*#SD) ;weight linking previous neuron j to neuron t (same layout as in Propagate)

Terror=Terror+Error*weight

Next t

PokeD(*PstartError+j*#SD,Terror)

Next j

Next i

;cross entropy

; Error=0

; For i=0 To nbneuron-1

; target.d=PeekD(*startTarget+i*#SD)

; output.d=PeekD(*startOutput+i*#SD)

; Error=(target*Log(output))+((1-target)*Log(1-output))+Error

; Next i

; Error=-Error

; ProcedureReturn Error

EndProcedure

;calculate outputs

ProcedureDLL Propagate(*net)

nblayer.i=PeekI(*net)

For i=2 To nblayer

*Layer=PeekI(*net+#S*i)

nbneuron.i=PeekI(*Layer)

nbprevoutput.i=PeekI(*Layer+#S)

*StartBias=PeekI(*Layer+#S*2)

*StartOutput=PeekI(*Layer+#S*3)

*StartSom=PeekI(*layer+#S*6)

type.i=PeekI(*Layer+#S*7)

*StartWeight=*Layer+#S*8

*PLayer=PeekI(*net+#S*(i-1))

*PStartOutput=PeekI(*PLayer+#S*3)

;SoftMax

If type=#TypeSoftMax

;compute the weighted sum of each neuron

somsom.d=0

For j=0 To nbneuron-1

output.d=0.0

For k=0 To nbprevoutput-1

input.d=PeekD(*PStartOutput+k*#SD)

weight.d=PeekD(*StartWeight+k*#SD+j*nbprevoutput*#SD)

output=output+weight*input

Next k

bias.d=PeekD(*StartBias+j*#SD)*1

output=output+bias

PokeD(*StartSom+j*#SD,output)

;compute the raw (not yet normalized) output of each neuron

output=Exp(output)

PokeD(*StartOutput+j*#SD,output)

somsom=somsom+output

Next j

For j=0 To nbneuron-1

output=PeekD(*StartOutput+j*#SD)

output=output/somsom

PokeD(*StartOutput+j*#SD,output)

Next j

Continue

EndIf

;Sigmoid/Swish1/LeakyReLu/TanH

For j=0 To nbneuron-1

output.d=0.0

For k=0 To nbprevoutput-1

input.d=PeekD(*PStartOutput+k*#SD)

weight.d=PeekD(*StartWeight+k*#SD+j*nbprevoutput*#SD)

output=output+weight*input

Next k

bias.d=PeekD(*StartBias+j*#SD)*1

output=output+bias

PokeD(*StartSom+j*#SD,output)

If type=#TypeSigmoid

PokeD(*StartOutput+j*#SD,Sigmoid(output))

ElseIf type=#TypeSwish1

PokeD(*StartOutput+j*#SD,Swish1(output))

ElseIf type=#TypeLeakyReLu

PokeD(*StartOutput+j*#SD,LeakyReLu(output))

ElseIf type=#TypeTanH

PokeD(*StartOutput+j*#SD,TanH(output))

EndIf

Next j

Next i

EndProcedure

ProcedureDLL GradientDescent(*net,LearningRate.d,drop_rate.i)

nblayer=PeekI(*net)

For i=nblayer To 2 Step -1

*layer=PeekI(*net+#S*i)

nbneuron=PeekI(*layer)

*startBias=PeekI(*Layer+#S*2)

*startOutput=PeekI(*layer+#S*3)

*startError=PeekI(*layer+#S*4)

*startSom=PeekI(*layer+#S*6)

type.i=PeekI(*layer+#S*7)

*startWeight=*Layer+#S*8

*Player=PeekI(*net+#S*(i-1))

Pnbneuron=PeekI(*Player)

*PstartOutput=PeekI(*Player+#S*3)

*PstartError=PeekI(*Player+#S*4)

If type=#TypeSoftMax

somExp.d=0

For j=0 To nbneuron-1

som.d=PeekD(*startSom+j*#SD)

somExp.d=somExp+Exp(som)

Next j

EndIf

For j=0 To nbneuron-1

If i<nblayer And Random(100)<drop_rate:Continue:EndIf

erreur.d=PeekD(*startError+j*#SD)

outputneuron.d=PeekD(*startOutput+j*#SD)

If type=#TypeSigmoid

formula.d=1-outputneuron ;(1-f(x))

formula=outputneuron*formula ;f'(x)=f(x) * (1-f(x))

ElseIf type=#TypeSwish1

som.d=PeekD(*startSom+j*#SD)

formula.d=1-outputneuron ;(1-f(x))

formula=Sigmoid(som)*formula ;sigmoid(x) * (1-f(x)); f(x)/x equals sigmoid(x) but gives 0/0 when x=0

formula=outputneuron+formula ;f'(x) = f(x) + sigmoid(x) * (1-f(x))

ElseIf type=#TypeTanH

formula.d=1-(outputneuron*outputneuron)

ElseIf type=#TypeLeakyRelu

som.d=PeekD(*startSom+j*#SD)

If som<0.0

formula.d=0.01

Else

formula.d=1.0

EndIf

ElseIf type=#TypeSoftMax

som.d=PeekD(*startSom+j*#SD)

somsquare.d=somExp*somExp

som2.d=somExp-Exp(som)

formula.d=(som2*Exp(som))/somsquare

EndIf

For k=0 To Pnbneuron-1

prevoutput.d=PeekD(*PstartOutput+k*#SD)

weight.d=PeekD(*startWeight+j*#SD*Pnbneuron+k*#SD)

gradient.d=-erreur*formula*prevoutput*LearningRate

newWeight.d=weight-gradient

PokeD(*startWeight+j*#SD*Pnbneuron+k*#SD,newWeight)

Next k

gradient=-erreur*formula*LearningRate

bias.d=PeekD(*startBias+j*#SD)

newBias.d=bias-gradient

PokeD(*startBias+j*#SD,newBias)

Next j

Next i

EndProcedure

ProcedureDLL SaveNN(*net,name$)

nblayer.i=PeekI(*net)

If CreateFile(0,name$)=0:ProcedureReturn 0:EndIf

WriteInteger(0,nblayer) ;nblayer

For i=1 To nblayer

*layer=PeekI(*net+#S*i)

nbneuron.i=PeekI(*layer)

WriteInteger(0,nbneuron) ;nb neuron

WriteInteger(0,PeekI(*layer+#S)) ;nb prev neuron

WriteInteger(0,PeekI(*layer+#S*7)) ;type

;if input layer, continue

nbneuron-1

If i=1:Continue:EndIf

*endSom=PeekI(*layer+#S*6)+nbneuron*#SD

*layer=*layer+#S*8

For j=*layer To *endsom Step #SD

WriteDouble(0,PeekD(j))

Next j

Next i

CloseFile(0)

EndProcedure

ProcedureDLL LoadNN(name$)

If ReadFile(0,name$)=0:ProcedureReturn 0:EndIf

nblayer.i=ReadInteger(0)

*net=CreateNet()

For i=1 To nblayer

nbneuron.i=ReadInteger(0)

nbprevneuron.i=ReadInteger(0)

type.i=ReadInteger(0)

*net=AddLayer(*net,nbneuron,type)

*layer=PeekI(*net+#S*i)

nbneuron-1

;if input layer, continue

If i=1:Continue:EndIf

*endSom=PeekI(*layer+#S*6)+nbneuron*#SD

*layer=*layer+#S*8

For j=*layer To *endsom Step #SD

PokeD(j,ReadDouble(0))

Next j

Next i

EndLayer(*net)

CloseFile(0)

ProcedureReturn *net

EndProcedure
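Propagate and GradientDescent both address the weights of a layer as one flat block of doubles, where the weight linking previous neuron `k` to neuron `j` sits at offset `(j*nbprevoutput+k)*#SD`. The following stand-alone sketch illustrates that indexing on a small buffer. It does not use the DLL. The sizes, the stored values and the variable names are assumptions made for the example.

```purebasic
; Sketch of the flat weight layout (illustrative values, not library code).
#SD = 8 ; size of a double, as in the library

nbneuron = 3      ; neurons in the current layer
nbprevoutput = 2  ; neurons in the previous layer

*weight = AllocateMemory(#SD * nbneuron * nbprevoutput)
If *weight = 0 : End : EndIf

; Store a recognizable value in each cell: neuron j, input k -> j + k/10
For j = 0 To nbneuron - 1
  For k = 0 To nbprevoutput - 1
    value.d = j + k / 10.0
    PokeD(*weight + (j * nbprevoutput + k) * #SD, value)
  Next k
Next j

; Read back the weight that links previous neuron k=1 to neuron j=2
j = 2 : k = 1
Debug StrD(PeekD(*weight + (j * nbprevoutput + k) * #SD), 1) ; shows 2.1

FreeMemory(*weight)
```

All the weights of one neuron are therefore contiguous, which is why the inner loops over `k` walk the block in steps of `#SD`.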

Code:

;program to visualize the neural net

CompilerIf #PB_Compiler_Processor=#PB_Processor_x86

#S=4

CompilerElse

#S=8

CompilerEndIf

#SD=8

#TypeInput=0

#TypeSigmoid=1

#TypeSwish1=2

#TypeSoftMax=3

#TypeLeakyRelu=4

#TypeTanH=5

#real=0

#positive=1

If InitSprite() = 0 Or InitKeyboard() = 0 Or InitMouse() = 0 Or OpenWindow(0, 0, 0, 800, 600, "Neural Network Test", #PB_Window_SystemMenu | #PB_Window_ScreenCentered)=0 Or OpenWindowedScreen(WindowID(0),0,0,800,600,0,0,0,#PB_Screen_NoSynchronization )=0

MessageRequester("Error", "Can't open the sprite system", 0)

End

EndIf

Import "NeuroNetLib.lib"

CreateNet()

AddLayer(*net,NbNeuron.i,type.i)

InitWeight(*net,RandomType.i)

EndLayer(*net)

ReadOutput.d(*net,layer.i,neuron.i)

ReadSomme.d(*net,layer.i,neuron.i)

ReadBiasWeight.d(*net,layer.i,neuron.i)

ReadError.d(*net,layer.i,neuron.i)

ReadWeight.d(*net,layer.i,neuron.i,weight.i)

ReadTarget.d(*net,neuron.i)

ReadNbNeuron(*net,layer.i)

ReadPrevNbNeuron(*net,layer.i)

SetWeight(*net,layer.i,neuron.i,weight.i,value.d)

SetBiasWeight(*net,layer.i,neuron.i,bias.d)

DeleteNet(*net)

;activation.d(output.d) ;disabled: the DLL exports no function with this name

Propagate(*net)

SetInput(*net,Input.i,value.d)

SetDesireOutput(*net,Output.i,value.d)

CalculError.d(*net)

GradientDescent(*net,LearningRate.d,drop_rate.i)

LoadNN(name$)

SaveNN(*net,name$)

EndImport

Procedure testxor(*net,p1.i,p2.i)

nblayer=PeekI(*net)

SetInput(*net,1,p1)

SetInput(*net,2,p2)

Propagate(*net)

r.i=Round(ReadOutput(*net,nblayer,1),#PB_Round_Nearest)

sxor=#Red

If r=p1!p2

Sxor=#Green

EndIf

ProcedureReturn sxor

EndProcedure

Procedure testand(*net,p1.i,p2.i)

nblayer=PeekI(*net)

SetInput(*net,1,p1)

SetInput(*net,2,p2)

Propagate(*net)

r.i=Round(ReadOutput(*net,nblayer,2),#PB_Round_Nearest)

sand=#Red

If r=p1&p2

Sand=#Green

EndIf

ProcedureReturn sand

EndProcedure

Procedure testor(*net,p1.i,p2.i)

nblayer=PeekI(*net)

SetInput(*net,1,p1)

SetInput(*net,2,p2)

Propagate(*net)

r.i=Round(ReadOutput(*net,nblayer,3),#PB_Round_Nearest)

sor=#Red

If r=p1|p2

Sor=#Green

EndIf

ProcedureReturn sor

EndProcedure

;create neural network

#nbLayer=3

#nbInput=2

LearningRate.d=0.01

*net=CreateNet()

*net=AddLayer(*net,#nbInput,#TypeInput) ;layer 1

*net=AddLayer(*net,6,#TypeSwish1) ;layer 2

*net=AddLayer(*net,3,#TypeSigmoid) ;layer 3

EndLayer(*net)

InitWeight(*net,#real)

;test data

Structure dataset

input1.d

input2.d

result1.d

result2.d

result3.d

EndStructure

Dim dataset.dataset(3) ;4 samples (0..3), so RandomizeArray() shuffles exactly the samples used for training

;XOR AND OR

For i=0 To 1

For j=0 To 1

dataset(a)\input1=i

dataset(a)\input2=j

bool.i=i!j

dataset(a)\result1=bool

bool.i=i&j

dataset(a)\result2=bool

bool.i=i|j

dataset(a)\result3=bool

a+1

Next j

Next i

Repeat

Repeat:Until WindowEvent()=0

FlipBuffers()

ClearScreen(#Black)

ExamineKeyboard()

;train the network

For u=1 To 10

RandomizeArray(dataset())

For ti=0 To 3

SetInput(*net,1,dataset(ti)\input1)

SetInput(*net,2,dataset(ti)\input2)

Propagate(*net)

SetDesireOutput(*net,1,dataset(ti)\result1) ;XOR

SetDesireOutput(*net,2,dataset(ti)\result2) ;AND

SetDesireOutput(*net,3,dataset(ti)\result3) ;OR

CalculError(*net)

GradientDescent(*net,LearningRate,0)

Next ti

Next u

StartDrawing(ScreenOutput())

;display inputs

y.i=100-(#nbInput<<1)*100

min.d=10000

a1=0:a2=0:a3=0

For i=1 To #nblayer

nbneuron.i=ReadNbNeuron(*net,i)

nbprevneuron.i=ReadPrevNbNeuron(*net,i)

y=200-(nbneuron>>1)*100

yy=200-(nbprevneuron>>1)*100

For j=1 To nbneuron

For t=1 To nbprevneuron

dx1=100+i*150

dy1=j*120+y-7+t

dx2=100+(i-1)*150

dy2=t*120+yy-7+t

dx3=(dx1-dx2)/3+dx2

dy3=(dy1-dy2)/3+dy2-10

LineXY(dx1,dy1,dx2,dy2)

DrawText(dx3,dy3,StrD(readWeight(*net,i,j,t),3))

Next t

Next j

Next i

y.i=200-(#nbInput>>1)*100

For i=1 To #nbInput

Circle(250,i*120+y,40)

Circle(250,i*120+y,38,#Black)

DrawText(234,i*120+y-7,StrD(readOutput(*net,1,i),3))

Next i

For i=2 To #nblayer

nbneuron=ReadNbNeuron(*net,i)

nbprevneuron=ReadprevNbNeuron(*net,i)

y.i=200-(nbneuron>>1)*100

yy.i=200-(nbprevneuron>>1)*100

For j=1 To nbneuron

Circle(100+i*150,j*120+y,40)

Circle(100+i*150,j*120+y,38,#Black)

DrawText(84+i*150,j*120+y-40,StrD(readOutput(*net,i,j),3),#Green)

If i=#nblayer

DrawText(84+i*150,j*120+y-20,StrD(readTarget(*net,j),3),#White)

EndIf

DrawText(84+i*150,j*120+y,StrD(readError(*net,i,j),3),#Red)

DrawText(84+i*150,j*120+y+20,StrD(readSomme(*net,i,j),3),#Yellow)

DrawText(84+i*150,j*120+y+40,StrD(ReadBiasWeight(*net,i,j),3),#Blue)

Next j

Next i

;display square

For i=0 To 100

For t=0 To 100

SetInput(*net,1,i/100)

SetInput(*net,2,t/100)

Propagate(*net)

color1.d=readOutput(*net,#nblayer,1)*255

color2.d=readOutput(*net,#nblayer,2)*255

color3.d=readOutput(*net,#nblayer,3)*255

Plot(600+i,t+170,RGB(color1,color1,color1))

Plot(600+i,t+300,RGB(color2,color2,color2))

Plot(600+i,t+440,RGB(color3,color3,color3))

Next t

Next i

DrawingMode(#PB_2DDrawing_Transparent)

DrawText(600,170,"0,0=0",testxor(*net,0,0))

DrawText(665,170,"1,0=1",testxor(*net,1,0))

DrawText(600,80+170,"0,1=1",testxor(*net,0,1))

DrawText(665,80+170,"1,1=0",testxor(*net,1,1))

DrawText(640,40+170,"XOR",#White)

DrawText(600,300,"0,0=0",testand(*net,0,0))

DrawText(665,300,"1,0=0",testand(*net,1,0))

DrawText(600,80+300,"0,1=0",testand(*net,0,1))

DrawText(665,80+300,"1,1=1",testand(*net,1,1))

DrawText(640,40+300,"AND",#White)

DrawText(600,440,"0,0=0",testor(*net,0,0))

DrawText(665,440,"1,0=1",testor(*net,1,0))

DrawText(600,80+440,"0,1=1",testor(*net,0,1))

DrawText(665,80+440,"1,1=1",testor(*net,1,1))

DrawText(640,40+440,"OR",#White)

StopDrawing()

Until KeyboardPushed(#PB_Key_Escape)
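The hidden layer in this test uses the Swish activation, f(x) = x * sigmoid(x), whose derivative can be written f'(x) = f(x) + sigmoid(x) * (1 - f(x)), with sigmoid(x) = f(x)/x for x other than 0. As a sanity check, the sketch below compares that formula with a central finite difference. It does not use the DLL. The procedure names ending in `Sketch`, the step `h` and the sampled points are assumptions for this example.

```purebasic
; Sketch: compare the analytic Swish derivative with a finite difference.
Procedure.d SigmoidSketch(x.d)
  ProcedureReturn 1 / (1 + Exp(-x))
EndProcedure

Procedure.d SwishSketch(x.d)
  ProcedureReturn x * SigmoidSketch(x)
EndProcedure

; f'(x) = f(x) + sigmoid(x) * (1 - f(x))
Procedure.d SwishDerivativeSketch(x.d)
  Protected f.d = SwishSketch(x)
  ProcedureReturn f + SigmoidSketch(x) * (1 - f)
EndProcedure

h.d = 0.00001 ; finite-difference step
For n = -3 To 3
  x.d = n
  numeric.d = (SwishSketch(x + h) - SwishSketch(x - h)) / (2 * h)
  Debug "x=" + StrD(x, 1) + "  analytic=" + StrD(SwishDerivativeSketch(x), 6) + "  numeric=" + StrD(numeric, 6)
Next n
```

The two columns should agree to several decimals at every sampled point, including x = 0, where the sigmoid form stays well defined.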